Have you ever wondered what goes into designing and building a robot? In an age of seemingly exponential technological growth, robots are becoming more and more commonplace. On land, robots have excelled at tasks such as assembly line manufacturing, warehouse logistics, and even household chores. Engineers and researchers are now designing robots capable of exploring other environments such as the ocean. The use of robots underwater can aid humans in many tasks, including engineering projects like offshore construction. This approach significantly improves safety by removing humans from often dangerous situations, while also increasing efficiency. However, the underwater environment differs dramatically from land, posing many challenges for researchers.

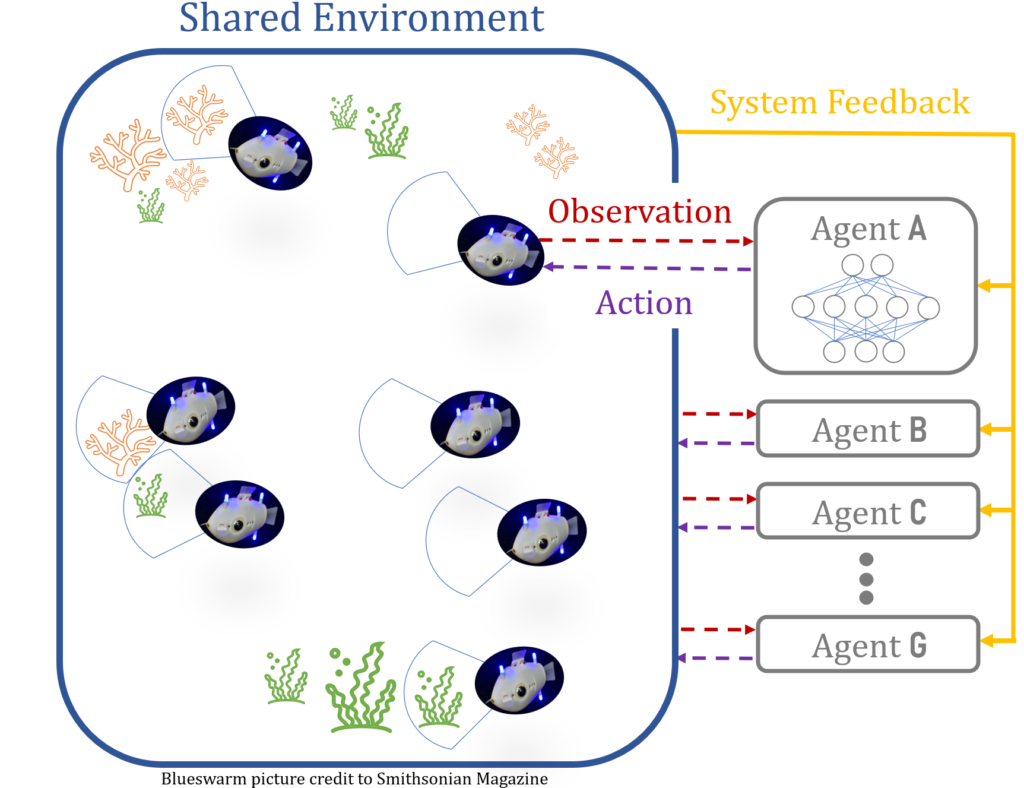

Akshaya Agrawal, a fifth-year PhD candidate in the Robotics Department at Oregon State University’s College of Engineering, is tackling the challenges of implementing robots underwater. Ocean currents create drag (up to 800 times greater than what we experience on land), and communication signals like Wi-Fi do not travel well underwater, making it difficult to localize a robot (determine its exact position). Akshaya’s research involves developing and testing motion-planning algorithms designed to help teams of robots coordinate movement and perform tasks underwater. She uses realistic simulations to assess how robots perform underwater, coupled with laboratory tests on ground-based robots, and plans to transition to an underwater testing phase in the future.

Akshaya’s passion for engineering and robotics began at an early age. Her journey from India to Oregon is inspirational, as is how she’s redefining the academic landscape for women in robotics. To hear more about her story, all the cool robots she’s working with, and the steps involved in getting them underwater, tune in to KBVR 88.7 FM this Sunday, Feb. 2. You can also listen to the episode wherever you get your podcasts, including KBVR, Spotify, and Apple!