The Skill Students Resist, and Employers Expect

I have a confession. When I was earning my M.S.Ed. online, I dreaded seeing “group project” on a syllabus. I juggled coursework with a full-time job, logged in at odd hours, and did most of the work on every collaborative assignment. My standards were higher, my contributions more substantial, my late nights longer. I carried the team.

Here’s the awkward part: so did everyone else.

Researchers have been studying this phenomenon since 1979, when psychologists Michael Ross and Fiore Sicoly found that group members consistently overestimate their own contributions (Ross & Sicoly, 1979). When you ask everyone on a team to estimate their percentage of the work, the numbers don’t add up to 100%; they add up to 114% on six-person teams. In studies of scientific coauthors, that number balloons to 167% (Herz et al., 2020). The bigger the team, the worse it gets: eight-person groups claim a collective 140% of the credit (Schroeder et al., 2016).

So if you or your students have ever finished a group project thinking “I’m the only one who pulled my weight,” congratulations. You’re not a uniquely burdened hero. You’re experiencing a well-documented cognitive bias known as egocentric overclaiming. And so is every other person on the team.

If you’ve ever been on the receiving end of student complaints about group work, this bias is a big part of why. Each student genuinely remembers their own effort more vividly than anyone else’s. The frustration is real, even when the workload was more balanced than it felt.

Why Collaboration Belongs in Online Course Design

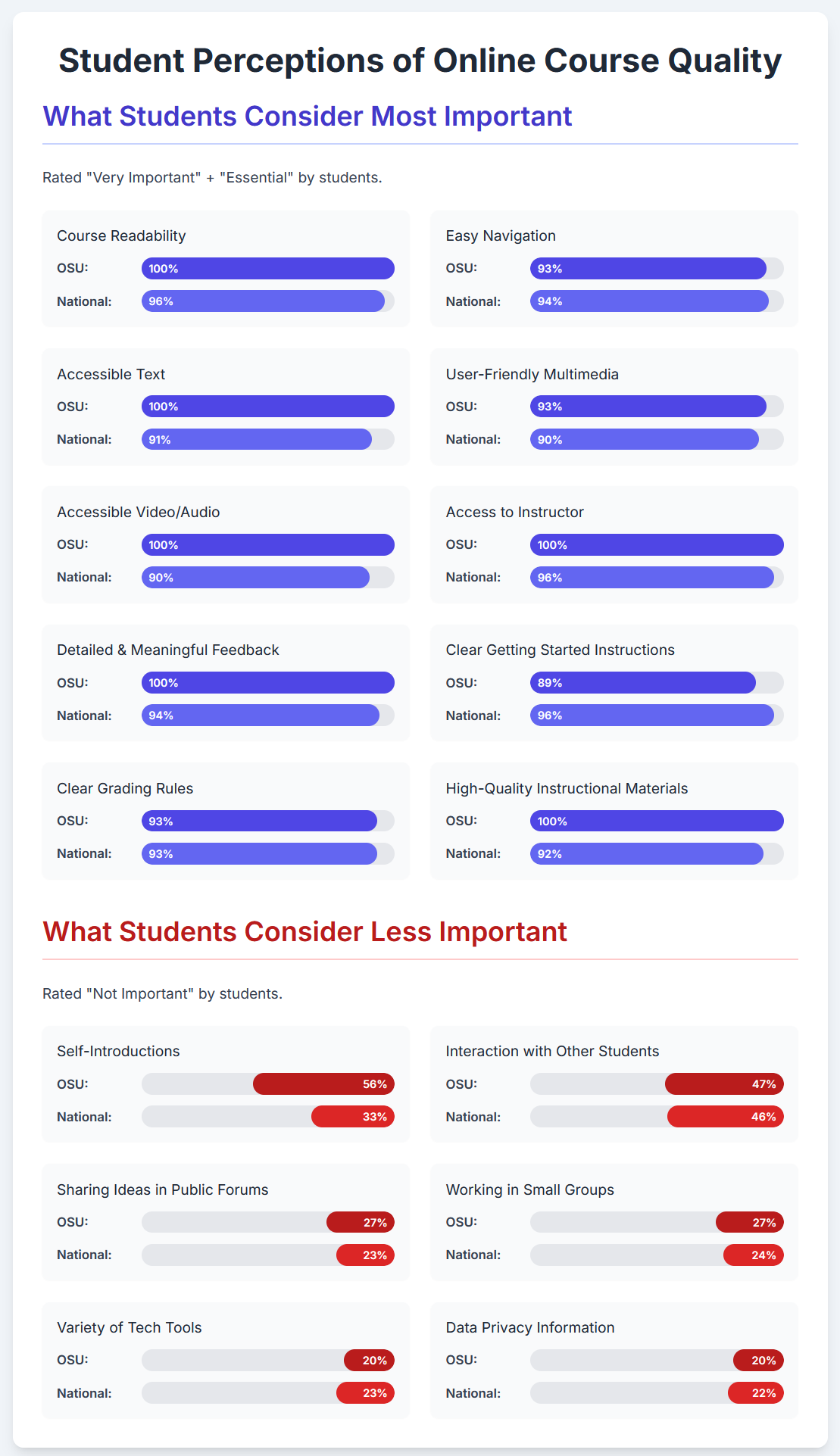

That frustration is one reason faculty hesitate to assign collaborative work, and one reason students dread it. When the experience feels unfair (even when it isn’t), it’s hard to see the value. And yet, employers keep saying collaboration is exactly what they need. NACE consistently ranks teamwork among the top competencies employers seek (NACE Job Outlook, 2025). And with the rise of remote and hybrid work, the ability to collaborate asynchronously with a distributed team isn’t a nice-to-have; it’s the job.

Here’s what I find most compelling, and what I didn’t appreciate until years after finishing my own degree: the asynchronous collaboration I did as an online student may have been closer to real workplace collaboration than any in-person group project. Navigating shared documents, negotiating timelines with classmates in different time zones, and giving written feedback to peers I rarely interacted with face-to-face, I was rehearsing exactly the skills I now use every day in a distributed professional team. I just didn’t know it at the time.

And the data supports this. Research published in the Journal of Asynchronous Learning Networks found that online programs emphasizing student engagement and collaborative practices achieved completion rates of 85% or higher, meeting or exceeding those of their face-to-face counterparts (Moore & Fetzner, 2009). Faculty and instructional designers are paying attention, and so are employers. The question, then, isn’t whether to include collaboration in online courses. It’s how to design it so students experience it as preparation rather than punishment.

Making It Work: Practical Strategies for Course Design

None of this means we should assign a group project and hope for the best. When collaboration is poorly designed, with vague instructions, uneven accountability, and misaligned outcomes, student frustration is justified. Here are approaches that work across disciplines:

Start with community, not content. Introductory discussions can feel formulaic (“share your name, major, and a fun fact”), but they don’t have to be. When students share their backgrounds, goals, and even their anxieties about the course in week one, it lays the groundwork for everything collaborative that follows. The shift is subtle but real: from “strangers assigned to a group” to “people who know something about each other.”

Be intentional about team formation. Random group assignment is easy, but a little structure goes a long way. Consider using surveys to match students by schedule availability, working style, or shared interests. Some institutions use dedicated tools (at OSU, for example, Ecampus offers a group finder tool), while others have students use AI to synthesize individual preferences into a working team charter. The goal is the same regardless of approach: help students start from common ground rather than cold introductions.

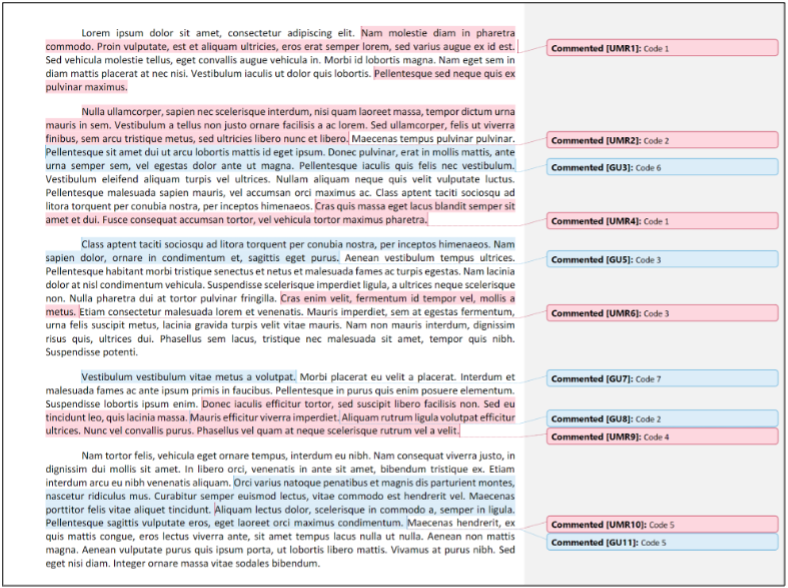

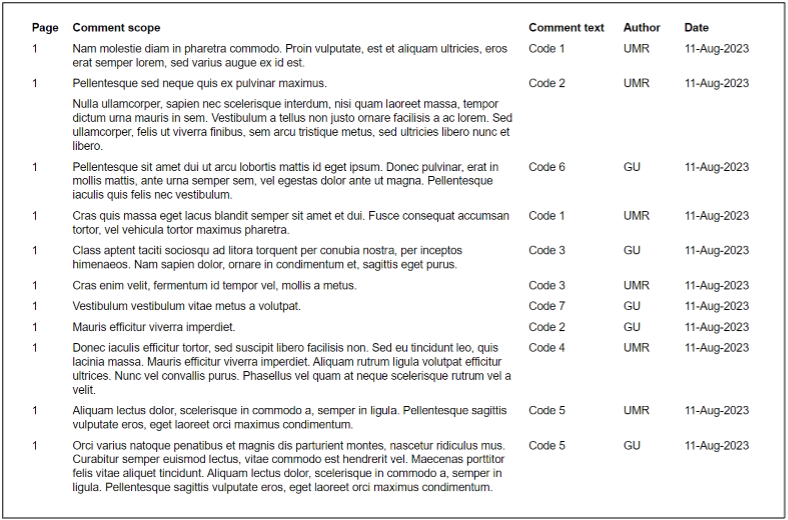

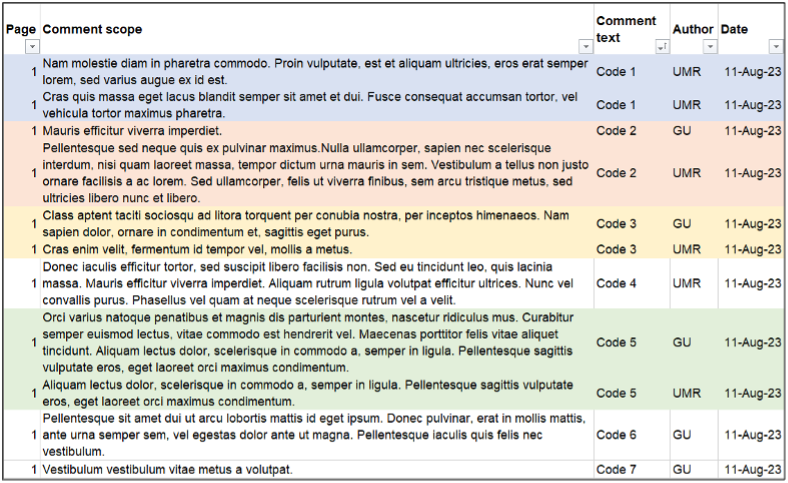

Make contributions visible. Remember that overclaiming bias? One of the best ways to counteract it is to build in structured peer evaluation of team contributions, not a review of each other’s papers, but an honest assessment of how each member showed up for the group. When teammates rate each other on dimensions like participation, communication, and follow-through, it creates accountability and gives instructors insight they wouldn’t otherwise have. At OSU, Ecampus has developed a custom tool for this purpose. CATME is a widely used option, and even a well-designed Google Form with clear criteria can do the job. However you implement it, the point is to give students a structured way to reflect on, and be accountable for, their collaboration.
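For instructors who export peer-evaluation responses to a spreadsheet, here is a minimal sketch of how the ratings might be summarized. It is an illustration, not the method used by the Ecampus tool or CATME: the row layout (rater, ratee, dimension, score), the 1-to-5 scale, the student names, and both function names are assumptions made up for this example.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical form export: (rater, ratee, dimension, score on an assumed 1-5 scale).
SAMPLE_ROWS = [
    ("Ana", "Ana", "participation", 5),
    ("Ben", "Ana", "participation", 4),
    ("Cam", "Ana", "participation", 3),
    ("Ana", "Ben", "participation", 4),
    ("Ben", "Ben", "participation", 5),
    ("Cam", "Ben", "participation", 4),
    ("Ana", "Cam", "participation", 2),
    ("Ben", "Cam", "participation", 3),
    ("Cam", "Cam", "participation", 4),
]


def summarize_peer_ratings(rows):
    """Average the scores each student received from teammates, per dimension.

    Self-ratings are skipped so the summary reflects peers only.
    """
    received = defaultdict(lambda: defaultdict(list))
    for rater, ratee, dimension, score in rows:
        if rater != ratee:
            received[ratee][dimension].append(score)
    return {
        ratee: {dim: float(mean(scores)) for dim, scores in dims.items()}
        for ratee, dims in received.items()
    }


def self_peer_gap(rows):
    """Mean self-rating minus mean peer rating for each student.

    A positive gap means the student rated themselves higher than
    their teammates did, which is the overclaiming pattern.
    """
    self_scores = defaultdict(list)
    peer_scores = defaultdict(list)
    for rater, ratee, _dimension, score in rows:
        target = self_scores if rater == ratee else peer_scores
        target[ratee].append(score)
    return {
        student: float(mean(self_scores[student]) - mean(peer_scores[student]))
        for student in self_scores
        if student in peer_scores
    }


if __name__ == "__main__":
    print(summarize_peer_ratings(SAMPLE_ROWS))
    print(self_peer_gap(SAMPLE_ROWS))
```

In this made-up data, every student's self-rating is higher than the average their teammates gave them. A gap like that is a conversation starter, not a verdict, and it is best read alongside the written comments students provide.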

Design for real-world parallels. In the sciences, interdisciplinary teams can tackle complex problems that mirror real-world research collaborations by designing solutions, analyzing data, and presenting findings as a group. In business courses, teams can build a business plan or marketing strategy together, or work through a case competition where they analyze a real scenario and present recommendations. In the humanities, students can collaborate on oral history projects or produce a podcast episode together, work that requires negotiation, shared decision-making, and a tangible product. The key is making the collaboration itself part of the learning outcome, not just a delivery mechanism.

A Better Way to Think About It

The next time a student groans about a group project or a colleague pushes back on interaction requirements, it might help to share the research on overclaiming. Not to dismiss their frustration, but to reframe it: the discomfort of collaboration isn’t a design flaw. It’s the learning happening.

And if they still insist they did most of the work? Well. So did everyone else.

References

- Herz, N., Dan, O., Censor, N., & Bar-Haim, Y. (2020). Authors overestimate their contribution to scientific work, demonstrating a strong bias. Proceedings of the National Academy of Sciences, 117(12), 6282–6285.

- Moore, J. C., & Fetzner, M. J. (2009). The road to retention: A closer look at institutions that achieve high course completion rates. Journal of Asynchronous Learning Networks, 13(3), 3–22.

- National Association of Colleges and Employers. (2025). Job outlook 2025. https://www.naceweb.org/career-readiness/competencies/

- Ross, M., & Sicoly, F. (1979). Egocentric biases in availability and attribution. Journal of Personality and Social Psychology, 37(3), 322–336.

- Schroeder, J., Caruso, E. M., & Epley, N. (2016). Many hands make overlooked work: Over-claiming of responsibility increases with group size. Journal of Experimental Psychology: Applied, 22(2), 238–246. See also: Why teams overinflate their contributions. Chicago Booth Review. https://www.chicagobooth.edu/review/why-teams-overinflate-their-contributions