For centuries, knowledge and access to education were restricted to a select few. In today’s world, almost anybody can access information through the web and, more recently, through AI tools. However, it is important to recognize that these tools, while offering broad access to content of every kind, also pose challenges. Generative AI has fundamentally changed how students interact with assignments, but it has also given instructors a powerful new lens for examining their own assessment design. Rather than treating AI solely as a threat to academic integrity, we can use it as a diagnostic tool: one that quickly reveals whether our assignments and rubrics actually measure what we think they measure. If an AI can complete an assignment and meet the stated criteria for success without engaging with course-specific learning, is that really a student problem, or a signal to revise the design?

A small shift in perspective, from “they’re using this to cheat” to “how can this help me prevent cheating?”, is especially important in online and hybrid environments, where traditional academic integrity controls such as proctored exams are either unavailable or undesirable. Instead of trying to outmaneuver AI or police its use, instructors can ask a more productive question: What does success on this assignment actually require?

Why AI Is a Helpful Design Tool

AI can function as an unusually honest “devil’s advocate.” It doesn’t get tired, anxious, or confused about instructions, and it excels at finding the most efficient path to meeting stated requirements. When an instructor gives an AI model an assignment prompt and a rubric, the resulting output can expose whether the rubric rewards deep engagement or simply fluent compliance.

If an AI can generate a response that appears to meet expectations without referencing key course concepts, grappling with assumptions, or making meaningful decisions, then students can likely do the same. In this way, AI acts less like a cheating student and more like a mirror held up to our assessment design.

An example using Copilot:

Stress-Testing Assignments Before Students Ever See Them

One practical workflow to test the resilience of your assignments is to run them through AI before they are deployed. Provide the model with the prompt and the rubric (nothing else) and ask it to produce a strong submission. Then evaluate that response using your own grading criteria.

The point is not to judge whether the AI’s answer is “good,” but to analyze why it meets the stated requirements so easily and, at first glance, so flawlessly. If the response earns high marks through generic explanations, surface-level analysis, or broadly applicable reasoning, that is evidence that the assessment may not be tightly aligned with course learning outcomes, may not demand deeper thinking and analysis, or may not elicit students’ own creativity. This kind of stress-testing takes only minutes, and it often surfaces issues that would otherwise become visible only after grading a full cohort.

The Task

Assignment Prompt

Subject: Chemical Engineering

Level: Upper-level undergraduate (3rd year)

Topic: Reactor Design & Engineering Judgment

Assignment: Conceptual Design and Analysis of a Chemical Reactor

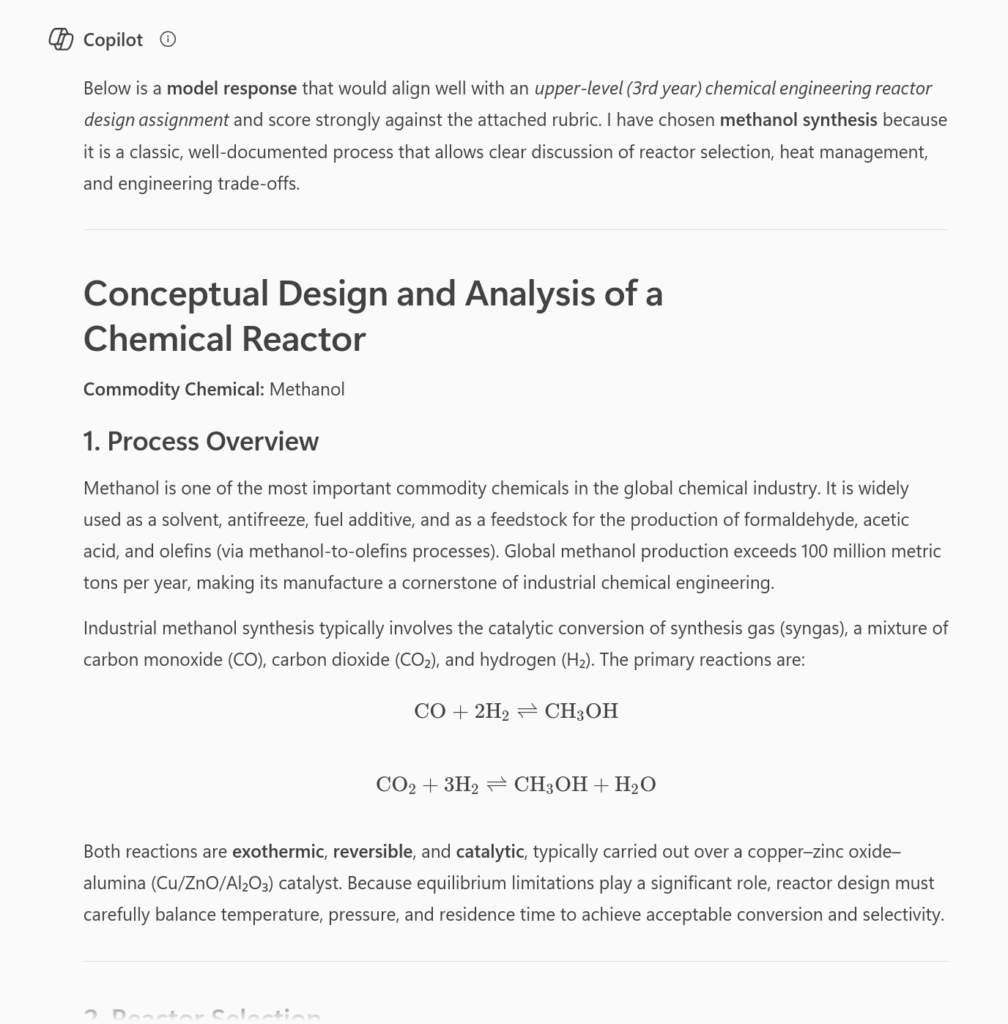

You are tasked with the preliminary design and analysis of a chemical reactor for the production of a commodity chemical of your choice (e.g., ammonia, methanol, ethylene oxide, sulfuric acid, or another well-established industrial product).

Your analysis should address the following:

- Process Overview
  - Briefly describe the selected chemical process and its industrial relevance.
  - Identify the primary reaction(s) involved and classify the reaction type(s) (e.g., exothermic/endothermic, reversible/irreversible, catalytic/non-catalytic).
- Reactor Selection
  - Propose an appropriate reactor type (e.g., CSTR, PFR, batch, packed bed).
  - Justify your selection based on reaction kinetics, heat transfer considerations, conversion goals, and operational constraints.
- Operating Conditions
  - Discuss key operating variables such as temperature, pressure, residence time, and feed composition.
  - Explain how these variables influence conversion, selectivity, and safety.
- Engineering Trade-Offs
  - Identify at least two major design trade-offs (e.g., conversion vs. selectivity, energy efficiency vs. safety, capital cost vs. operating cost).
  - Explain how an engineer might balance these trade-offs in practice.
- Limitations and Assumptions
  - Clearly state any simplifying assumptions made in your analysis.
  - Discuss the limitations of your proposed design at this preliminary stage.

Your response should demonstrate clear engineering reasoning rather than detailed numerical calculations. Where appropriate, qualitative trends, simplified relationships, or order-of-magnitude reasoning may be used.

Length: ~1,000–1,200 words

References: Not required, but accepted if used appropriately

The Rubric

| Criterion | Excellent (A) | Good (B) | Satisfactory (C) | Unsatisfactory (D/F) |
|---|---|---|---|---|
| Understanding of Chemical Engineering Principles | Demonstrates strong understanding of reaction engineering concepts and correctly applies them to the chosen process | Demonstrates general understanding with minor conceptual gaps | Shows basic familiarity but with notable misunderstandings or oversimplifications | Demonstrates weak or incorrect understanding of core concepts |
| Reactor Selection & Justification | Reactor choice is well justified using multiple relevant criteria (kinetics, heat transfer, safety, operability) | Reactor choice is reasonable but justification lacks depth or completeness | Reactor choice is weakly justified or based on limited reasoning | Reactor choice is inappropriate or unjustified |
| Analysis of Operating Conditions | Clearly explains how operating variables affect performance, safety, and efficiency | Explains effects of variables with minor omissions or inaccuracies | Provides limited or superficial discussion of operating conditions | Fails to meaningfully analyze operating variables |
| Engineering Trade-Offs | Insightfully identifies and explains realistic trade-offs, demonstrating engineering judgment | Identifies trade-offs but discussion lacks nuance or integration | Trade-offs are mentioned but poorly explained or generic | Trade-offs are absent or incorrect |
| Assumptions & Limitations | Assumptions are clearly stated and critically evaluated | Assumptions are stated but not fully examined | Assumptions are implicit or weakly articulated | Assumptions are missing or inappropriate |
| Clarity & Organization | Response is well structured, clear, and professional | Generally clear with minor organizational issues | Organization or clarity interferes with understanding | Poorly organized or difficult to follow |

Identifying Gaps in What We’re Measuring

AI performs particularly well on tasks that rely on recognition, pattern matching, and general world knowledge. This means it can easily succeed on assessments that emphasize recall, procedural execution, or elimination of obviously wrong answers. When that happens, the assessment may be measuring familiarity rather than understanding.

Revising these tasks does not require making them longer or more complex. Instead, instructors can focus on higher-order thinking and metacognition, for example by requiring students to articulate why a particular approach applies, what assumptions are being made, or how results should be interpreted. These shifts move the assessment away from answer production and toward critical and disciplinary thinking, without assuming that AI use can or should be eliminated. Identifying these gaps can also prompt you to revisit the structure of the assignment and check how each of its elements (purpose, instructions/task/prompt, and criteria for success) connects to the others, so that together they form a cohesive assignment.

In the second part of this blog post, I take the same task and work with the AI to refine its rubric.