I’m currently part of a student project developing a simulated self-driving vehicle. The project uses AirSim, an open-source simulator built on Unreal Engine for driving cars and flying drones.

Breaking this broad project into pieces that a group with little experience can digest has been an ongoing challenge. Getting to grips with the basics of reinforcement learning (RL) has been intense, but we have learned a lot.

Algorithmic Approach

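One of the approaches we have selected is a Deep Q-Network (DQN), which combines Q-learning with a deep neural network. Instead of keeping a table of Q-values for every state-action pair, the network learns to estimate Q(s, a), the expected discounted future reward of taking action a in state s.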
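To make "Q-learning plus a neural network" more concrete: Q-learning pushes Q(s, a) toward the target r + γ · max over a′ of Q(s′, a′). In the standard DQN setup, a neural network predicts the Q-values and a separate, periodically synchronized target network supplies the max term. The NumPy sketch below computes only the targets and a mean-squared-error loss for a batch; the network itself is left out, and the function names and γ = 0.99 are our assumptions rather than project code.

```python
import numpy as np

def dqn_targets(rewards, next_q_values, dones, gamma=0.99):
    """DQN regression targets: y = r + gamma * max_a' Q_target(s', a').

    rewards:       shape (batch,)            reward for each transition
    next_q_values: shape (batch, n_actions)  target-network Q-values of the next states
    dones:         shape (batch,)            1.0 if the episode ended there, else 0.0
    """
    rewards = np.asarray(rewards, dtype=np.float64)
    next_q_values = np.asarray(next_q_values, dtype=np.float64)
    dones = np.asarray(dones, dtype=np.float64)
    # A terminal transition has no future value, so its bootstrap term is masked out.
    return rewards + gamma * (1.0 - dones) * next_q_values.max(axis=1)

def td_loss(q_values, actions, targets):
    """Mean squared error between Q(s, a) for the actions actually taken and the targets."""
    q_values = np.asarray(q_values, dtype=np.float64)
    q_taken = q_values[np.arange(len(actions)), actions]  # pick Q(s, a) row by row
    return float(np.mean((q_taken - targets) ** 2))

# Two transitions: the second one ends the episode.
targets = dqn_targets([1.0, 0.0], [[0.5, 2.0], [3.0, 1.0]], [0.0, 1.0], gamma=0.9)
loss = td_loss([[2.0, 1.0], [0.5, 0.0]], [0, 1], targets)
```

In a real DQN, `loss` would be minimized with respect to the online network’s weights by gradient descent; many implementations use a Huber loss instead of plain MSE for robustness to large errors.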

We implement the DQN so that learning starts only after 20,000 steps; until then, the agent gathers experience without updating the network. We’re still experimenting with this number: a DQN needs a fair amount of experience before it produces useful results, so dialing it in will be essential.
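A warm-up period like this is usually paired with an experience replay buffer: every transition is stored, and once enough have accumulated, the network trains on random minibatches drawn from the buffer. The sketch below shows that gating logic in plain Python. Only the 20,000-step figure comes from our setup; the buffer capacity, `TRAIN_FREQ`, `BATCH_SIZE`, and the names `ReplayBuffer` and `should_update` are illustrative assumptions.

```python
import random
from collections import deque

LEARNING_STARTS = 20_000  # warm-up length we currently use (still being tuned)
TRAIN_FREQ = 4            # assumption: one gradient update every 4 environment steps
BATCH_SIZE = 32           # assumption

class ReplayBuffer:
    """Fixed-size store of (state, action, reward, next_state, done) transitions."""

    def __init__(self, capacity):
        self.buffer = deque(maxlen=capacity)  # oldest transitions are dropped first

    def add(self, transition):
        self.buffer.append(transition)

    def sample(self, batch_size):
        # Uniform random minibatch; this breaks the correlation between consecutive steps.
        indices = random.sample(range(len(self.buffer)), batch_size)
        return [self.buffer[i] for i in indices]

    def __len__(self):
        return len(self.buffer)

def should_update(step, learning_starts=LEARNING_STARTS, train_freq=TRAIN_FREQ):
    """True when a gradient update should run at this environment step."""
    return step >= learning_starts and step % train_freq == 0

# Simulated run with placeholder transitions instead of real simulator data.
buffer = ReplayBuffer(capacity=100_000)
updates = 0
for step in range(25_000):
    buffer.add((step, 0, 0.0, step + 1, False))
    if should_update(step):
        batch = buffer.sample(BATCH_SIZE)  # a real agent would train on this batch
        updates += 1
```

After 25,000 steps the buffer holds 25,000 transitions but only 1,250 updates have run, all of them after step 20,000. Raising `LEARNING_STARTS` gives the first updates more diverse data at the cost of a longer period with no learning at all.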

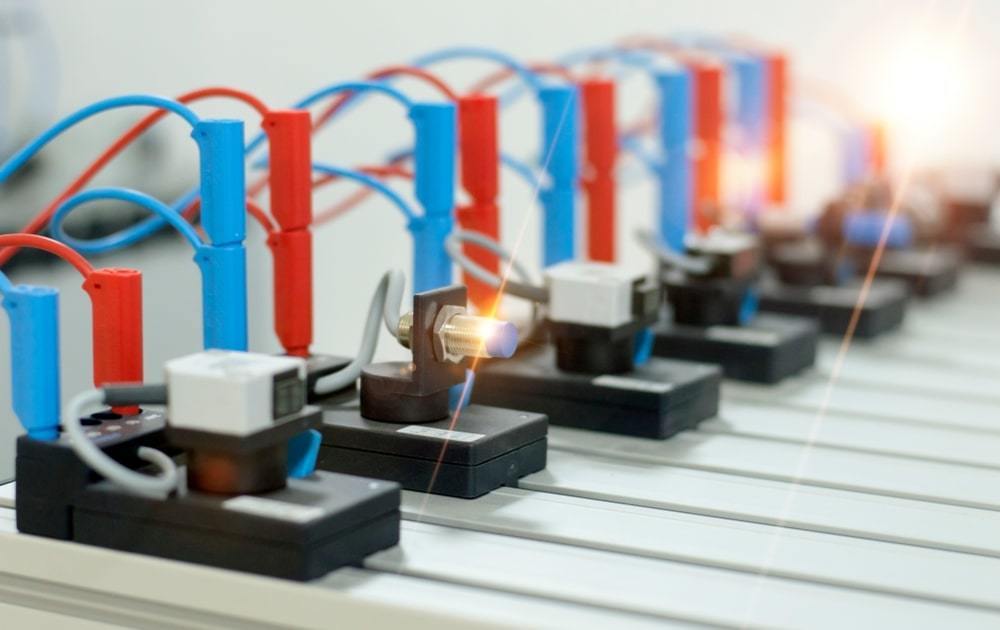

Sensors

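Next, we have the sensors. The distance sensor is a simulated infrared sensor that measures the distance to nearby objects. Here, nearby means within about 40 meters. AirSim reports distances in meters, whereas Unreal Engine’s native unit is the centimeter, so this range corresponds to 4,000 Unreal units rather than 40. We also use GPS and camera sensors.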
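As a rough sketch of turning raw readings into NumPy arrays: in AirSim’s Python client, a distance reading arrives as a `DistanceSensorData` object whose `distance` field is in meters, and an uncompressed image from `simGetImages` arrives as an `ImageResponse` with `image_data_uint8`, `height`, and `width` fields. To stay runnable without a simulator, the hypothetical helpers below take those plain values directly. The 3-channel image layout is an assumption, since the channel count may differ between AirSim versions.

```python
import numpy as np

MAX_RANGE_M = 40.0  # distance-sensor range mentioned above

def normalize_distance(distance_m, max_range_m=MAX_RANGE_M):
    """Clip distance readings (meters) to the sensor range and scale them to [0, 1]."""
    readings = np.asarray(distance_m, dtype=np.float64)
    return np.clip(readings, 0.0, max_range_m) / max_range_m

def camera_bytes_to_array(raw, height, width, channels=3):
    """Turn an uncompressed camera frame (raw uint8 bytes) into an H x W x C array."""
    frame = np.frombuffer(raw, dtype=np.uint8)
    return frame.reshape(height, width, channels)

def gps_to_array(latitude, longitude, altitude):
    """Pack a GPS fix into a float array for the rest of the pipeline."""
    return np.array([latitude, longitude, altitude], dtype=np.float64)

# 10 m maps to 0.25; readings outside [0, 40] m are clipped to the ends of the range.
distances = normalize_distance([10.0, 55.0, -1.0])
# A tiny fake 2 x 4 RGB frame built from 24 raw bytes.
image = camera_bytes_to_array(bytes(range(24)), height=2, width=4)
```

Normalizing to [0, 1] keeps distance features on a scale comparable to other inputs, which generally makes neural-network training better behaved.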

We will convert the data from each sensor into NumPy arrays and pass them to processing functions that turn the raw readings into a form the algorithm can use. The processed information will then feed into the reward function, shaping what the agent is rewarded for and, in turn, improving its decision-making and learning.
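As an illustration of how processed sensor data might influence the reward, here is a hypothetical shaped reward. It rewards progress toward a goal position (which could come from GPS), penalizes getting closer to an obstacle than a safety margin (from the distance sensor), and ends the episode with a large penalty on collision; in AirSim’s Python client the collision flag could come from `simGetCollisionInfo().has_collided`. The function name, weights, safety margin, and goal radius are all our assumptions, not the project’s reward function.

```python
import numpy as np

SAFE_DISTANCE_M = 5.0       # hypothetical: obstacles closer than this are penalized
COLLISION_PENALTY = -100.0  # hypothetical
GOAL_RADIUS_M = 2.0         # hypothetical: counts as reaching the goal

def compute_reward(prev_pos, pos, goal, obstacle_distance_m, collided):
    """Hypothetical shaped reward for one step; returns (reward, done).

    prev_pos, pos, goal: (x, y) positions in meters
    obstacle_distance_m: distance-sensor reading in meters
    collided:            True if the simulator reported a collision
    """
    if collided:
        return COLLISION_PENALTY, True
    prev_pos, pos, goal = (np.asarray(p, dtype=np.float64) for p in (prev_pos, pos, goal))
    # Positive when this step moved the car closer to the goal.
    progress = np.linalg.norm(goal - prev_pos) - np.linalg.norm(goal - pos)
    # Penalty grows linearly from 0 to 1 as an obstacle gets closer inside the margin.
    proximity = max(0.0, SAFE_DISTANCE_M - obstacle_distance_m) / SAFE_DISTANCE_M
    reached_goal = np.linalg.norm(goal - pos) < GOAL_RADIUS_M
    return float(progress - proximity), bool(reached_goal)

# Moving 3 m toward the goal with the nearest obstacle 2.5 m away: 3.0 - 0.5 = 2.5.
reward, done = compute_reward((0, 0), (3, 0), (10, 0), 2.5, collided=False)
```

Shaping terms like these give the agent feedback on every step rather than only at the end of an episode, though badly chosen weights can teach it to exploit the shaping instead of driving well.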

AirGym

Finally, we come to AirGym, the training environment, which combines OpenAI Gym with AirSim. It opens up a variety of training possibilities. One of the most exciting, which we have yet to reach, is faster training. For now, the group is still struggling with training (as is true of so many RL/ML projects).
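What makes a Gym-style environment useful is its small, fixed interface: `reset()` returns an initial observation, and `step(action)` returns the next observation, a reward, a done flag, and an info dict. The sketch below mimics that interface without importing Gym. `FakeCarSim` is a hypothetical stand-in for the AirSim client with toy one-dimensional dynamics, so the code runs without Unreal Engine; `CarEnv`, its observation layout, and its reward are likewise illustrative assumptions, not AirGym’s actual code.

```python
import numpy as np

class FakeCarSim:
    """Hypothetical stand-in for an AirSim client (toy 1-D dynamics)."""

    def __init__(self):
        self.x = 0.0

    def reset(self):
        self.x = 0.0

    def apply_action(self, action):
        # Action 0 = stay put, action 1 = drive forward 1 m.
        self.x += 1.0 if action == 1 else 0.0

    def read_sensors(self):
        # Distance to a wall at x = 10 m, capped at the 40 m sensor range.
        return {"position": self.x, "distance": min(max(10.0 - self.x, 0.0), 40.0)}

class CarEnv:
    """Gym-style environment: reset() -> obs, step(action) -> (obs, reward, done, info)."""

    def __init__(self, sim, max_steps=50):
        self.sim = sim
        self.max_steps = max_steps
        self.steps = 0

    def _observe(self):
        s = self.sim.read_sensors()
        return np.array([s["position"], s["distance"]], dtype=np.float32)

    def reset(self):
        self.sim.reset()
        self.steps = 0
        return self._observe()

    def step(self, action):
        prev_x = self.sim.read_sensors()["position"]
        self.sim.apply_action(action)
        self.steps += 1
        sensors = self.sim.read_sensors()
        reward = sensors["position"] - prev_x  # toy reward: forward progress
        crashed = sensors["distance"] <= 0.0
        if crashed:
            reward -= 10.0
        done = crashed or self.steps >= self.max_steps
        return self._observe(), reward, done, {"crashed": crashed}

env = CarEnv(FakeCarSim())
obs = env.reset()
done, total_reward = False, 0.0
while not done:
    obs, reward, done, info = env.step(1)  # always drive forward
    total_reward += reward
```

Because the agent only ever talks to `reset()` and `step()`, the fake simulator could be swapped for a real AirSim client without changing the DQN code. This sketch uses the classic four-value Gym `step` API; Gym 0.26+ and Gymnasium instead return `(obs, reward, terminated, truncated, info)` from `step()` and `(obs, info)` from `reset()`, and a real environment would subclass `gym.Env` and declare its `observation_space` and `action_space`.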

Stay tuned for training updates!